How managing ML-enabled products requires you to think differently

Adjusting to think in terms of probabilities and using data to identify problems

Happy 2023, the year of the Rabbit. I took about a month off from writing over the holidays. Reflecting on my writing these past two years, I’ve decided to adjust the cadence of my Substack from weekly to monthly. Why? I’ve written about all the topics I wanted to cover. Could I churn out more content for the sake of content? Sure, but that’s neither enjoyable to me nor beneficial to you. As always, finding a niche that sustains an interested audience and also feeds a writer is a delicate balance. Anyway, drop a comment if there are topics you wish I covered.

Over the last six months, I’ve been working on a chatbot product. A modern chatbot is an excellent example of an ML-enabled product, which I define as a product that applies machine learning models to deliver value. ML-enabled products are a specific sub-category of data-enabled products.

Compare that to the product I’m using for my newsletter: Substack. Substack is powered by user-generated content, but you don’t need to have pre-generated content before obtaining value from Substack, especially if you’re a writer. You sign up and start writing, creating the content that readers value. As the writer, you gain value by using Substack to manage writing, subscriptions, and delivery.

But with ML-enabled products, you need data in the first iteration to deliver value. Nowadays, some of the ML and data needs have been reduced by the availability of “off-the-shelf” models or publicly available data. This is analogous to the availability of open APIs. These public models and datasets reduce the effort to build your own models or gather your own data, but the effort isn’t zero.

In working on an ML-enabled product, I noticed a change in my way of thinking. Specifically, I noticed I had to discard some of the functional-oriented, deterministic techniques I’d learned as a PM. What does this mean?

Functional-oriented Product Management

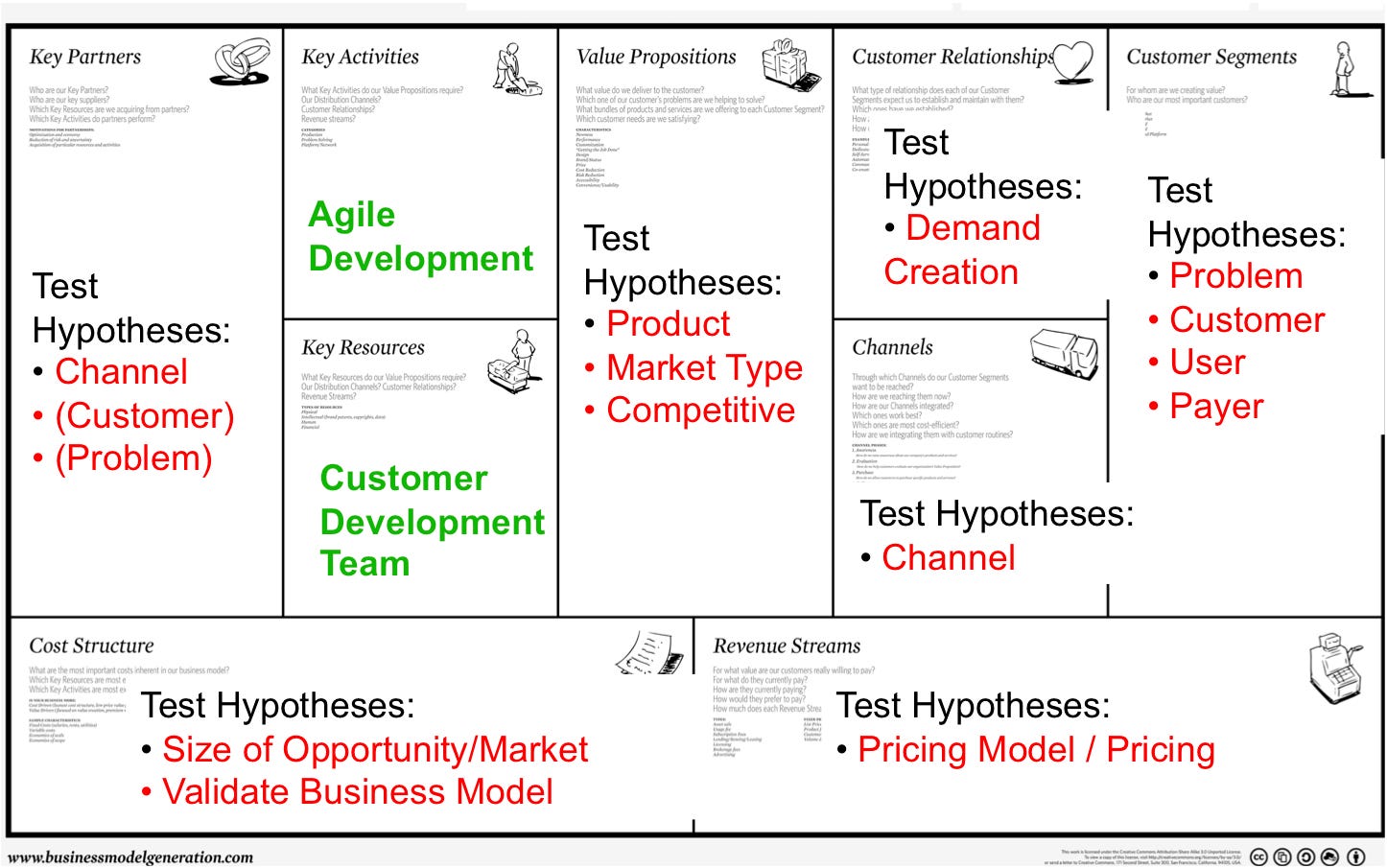

Many of us product managers start our careers thinking about user problems, value props, and features. Using tools like the Business Model Canvas, we develop hypotheses of value propositions, which we then try to provide via product features that are targeted at specific customers.

We deploy customer interviews and observations to uncover problems that we then try to solve. We mock up customer journeys, story maps, and service blueprints that explain, in sequential order, the happy state, future state, or desired vision. [Ironic, isn’t it, that we have so many ways of saying the same thing?]

In all these cases, our thinking is usually a complex version of Given A, When B, Then C. Having honed my skills in these techniques, transitioning to an ML-enabled product threw me a curve ball because it requires you to think in terms of probabilities.

When the techniques you’ve learned become less effective

It turns out that some of the traditional tools and techniques are just less effective. This isn’t obvious at first, so let me give an example.

Take customer interviews. Let’s say that from your interviews, you conclude that customers have trouble finding certain information with standard UI-based navigation. You hypothesize that search could answer questions and present information, so you propose it as a solution. You might then design some user journeys, and maybe you’ll even go really deep and design various search queries and re-queries.

Your first problem is that it’s very difficult to model all search options. Human conversations are so varied. Think of a simple conversation such as ordering food. The possible questions and variations are generally too numerous to model in full. Then, even if you could model them, managing those sequences is also too difficult.

This is where data is vital, not only in formulating your initial dataset, but in developing the ML model that will be used to deliver the solution. If you don’t have this data, meaning you don’t have users already performing searches in some fashion, it’s very difficult to build a search product. In short, the availability of data is often a prerequisite to even determining whether you can solve the problem. This was something I mentioned in “Stakeholder management series: Tips for working with data science.”

This focus on data is not necessary for most functional products.

Okay, so you need data, what’s the big deal? Don’t we always use data?

Not really. Most of our product decisions are what I’d call data-informed. Actually, in many cases, we make product decisions when we have insufficient data. Making decisions with a limited set of data is actually an important skill for PMs. But this skill comes back to bite you in the ass for ML-enabled products.

An ML-enabled product will deliver value if the model correctly predicts or decides the right outcome. Thus, value is binary from the user’s perspective. But we know the model is probabilistic, which means the value of the product to the user is probabilistic.

That’s very different from most functional-oriented products. Imagine if the submit button for a form had only an 80% chance of making the upload work. You’d say it’s broken. Yet ML-enabled products often have success rates significantly lower than 80%, and 80% is considered good.

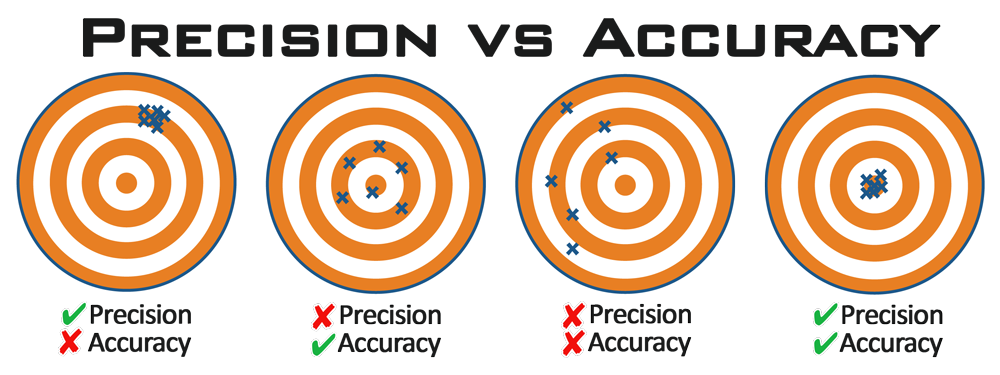

What this means is that with ML-enabled products, you often need to work backwards from the data. It isn’t that understanding user problems isn’t important. But you have to start with the quality of your data and figure out what it can predict with high accuracy and precision. This is the only way to consistently deliver value.
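To make “what can it predict with high accuracy and precision” concrete, here is a minimal sketch of how you might check it from a labeled sample. The intent names and the sample data are made up for illustration, and a real evaluation would use far more examples than this.

```python
from collections import Counter

def accuracy(y_true, y_pred):
    """Share of all predictions that match what the user actually meant."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def per_class_precision(y_true, y_pred):
    """For each predicted label: correct predictions of it / all predictions of it."""
    predicted = Counter(y_pred)
    correct = Counter(p for t, p in zip(y_true, y_pred) if t == p)
    return {label: correct[label] / predicted[label] for label in predicted}

# Hypothetical labeled sample: what users meant vs. what the model predicted.
y_true = ["balance", "balance", "balance", "balance", "dispute",
          "dispute", "rewards", "rewards", "rewards", "rewards"]
y_pred = ["balance", "balance", "balance", "balance", "rewards",
          "dispute", "rewards", "rewards", "dispute", "balance"]

print("accuracy:", accuracy(y_true, y_pred))
for label, value in sorted(per_class_precision(y_true, y_pred).items()):
    print(f"precision[{label}] = {value:.2f}")
```

In this toy sample, overall accuracy is 70%, but precision differs a lot by intent: “balance” is right 80% of the time it is predicted, while “dispute” is right only half the time. That kind of breakdown is what tells you which parts of the experience the data can actually support.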

Changing from deterministic to probabilistic thinking

The other challenge with ML-enabled products is that experiences can have both probabilistic and deterministic characteristics, and the difference between the two can be subtle within a single feature.

Let’s start with understanding deterministic, which is what we’re familiar with. Build a “Next” button and when the user clicks on it, it’ll go to the next page. It should always do this. Yes, we can complicate the situation with error handling and multi-conditions, but there is a defined next page (or manageable set of pages).

ML-enabled products don’t have, or can’t have, such a clearly defined next page. If they could, you probably wouldn’t need ML, and applying it would mean you’ve over-engineered your product.

Take a common ML-enabled product such as a product recommender, say for a credit card. You enter data, click submit, and get a result. It feels deterministic because you get a next-page result, but the specific result you obtain (assuming there are thousands of options) is probabilistic. The exact product recommended isn’t pre-determined. If it were pre-determined, you could use a complex set of conditions (i.e., rules) rather than an ML model.

This combination of the deterministic (i.e., you should always get a result) with the probabilistic (i.e., the exact result you will see) makes managing and thinking about ML-enabled products different.

For example, chatbots often require ML models to infer from your typing what you are trying to do. This is probabilistic. However, once it’s made that inference, the answer the chatbot provides is deterministic (at least in most servicing chatbots). You don’t want the chatbot to make up answers that are untrue. This is why creative chatbots are cool concepts, but problematic in practice for many businesses. Can you imagine a medical chatbot giving answers to treatments by making up the treatment content on the fly from a corpus of medical treatment data?
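Here is a rough sketch of that split, under heavy assumptions: the intents, keyword weights, answers, and the 0.7 threshold are all invented for illustration, and the keyword scorer is only a stand-in for a trained model. The point is the shape: a probabilistic guess at the intent, then a fixed, vetted answer.

```python
import math

# Deterministic part: one fixed, vetted answer per intent (hypothetical content).
ANSWERS = {
    "check_balance": "You can see your balance under Accounts > Overview.",
    "report_lost_card": "To report a lost card, go to Cards > Report lost or stolen.",
}
FALLBACK = "Sorry, I didn't catch that. Could you rephrase?"

# Probabilistic part: keyword weights standing in for a trained intent model.
KEYWORD_WEIGHTS = {
    "check_balance": {"balance": 2.0, "much": 1.0, "account": 0.5},
    "report_lost_card": {"lost": 2.0, "card": 1.0, "stolen": 2.0},
}

def predict_intent(message):
    """Return (intent, probability) from a softmax over keyword scores."""
    words = message.lower().split()
    scores = {
        intent: sum(weights.get(word, 0.0) for word in words)
        for intent, weights in KEYWORD_WEIGHTS.items()
    }
    total = sum(math.exp(score) for score in scores.values())
    probs = {intent: math.exp(score) / total for intent, score in scores.items()}
    best = max(probs, key=probs.get)
    return best, probs[best]

def reply(message, threshold=0.7):
    """Answer from the fixed table only when the model is confident enough."""
    intent, confidence = predict_intent(message)
    if confidence < threshold:
        return FALLBACK
    return ANSWERS[intent]

print(reply("I lost my card"))
print(reply("hello there"))
```

The first message clears the threshold and gets the lost-card answer; the second gives the model nothing to go on, so the bot falls back rather than guessing. Where you set that threshold is a product decision as much as a modeling one.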

Tell me more…I need another example.

Okay, let’s delve deeper using the recommender product example. Say that you believe certain users would benefit if we specifically recommended product A to them. You could:

a) Hard code a rule, which by-passes the ML recommender

b) Tweak the ML model so that parameters overindex to product A given the inputs

Which is the right answer? … It depends, because each has its own set of problems. If you take route (a), and assuming it works, it becomes difficult to manage over time as you add more and more rules. So this works in a world where rules are finite. If that’s not your world, then you will be in trouble.

Alternatively, if you take route (b), tweaking the model itself has implications for the model across all other products. If product A is recommended more, you’ll have to determine what will be recommended less by the model. That’s not obvious, and there might be multiple impacts, all difficult to foresee until you (or more likely, the data scientist working with you) try. This “trying” is not only time-intensive, but sometimes results in the data scientists coming back and telling you that this type of tweaking has too many negative side effects (i.e., it hurt the recommendation of every other product).
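A toy sketch of the two routes, with everything assumed: the product names, the raw scores, the “student” rule, and the size of the boost are hypothetical, and adding a constant to one score is a much cruder tweak than anything a data scientist would actually do to a model. It does show the core side effect, though: the probabilities have to sum to 1, so whatever product A gains, the others lose.

```python
import math

def softmax(scores):
    """Convert raw model scores into probabilities that sum to 1."""
    peak = max(scores.values())
    exps = {name: math.exp(value - peak) for name, value in scores.items()}
    total = sum(exps.values())
    return {name: e / total for name, e in exps.items()}

# Hypothetical raw scores from a recommender for one user.
scores = {"product_a": 1.0, "product_b": 1.5, "product_c": 0.5}

def recommend_with_rule(user, scores):
    """Route (a): a hard-coded rule that bypasses the model for some users."""
    if user.get("segment") == "student":  # hypothetical rule
        return "product_a"
    probs = softmax(scores)
    return max(probs, key=probs.get)

def recommend_with_boost(scores, boost=1.0):
    """Route (b): nudge the scores toward product A, return new probabilities."""
    boosted = dict(scores, product_a=scores["product_a"] + boost)
    return softmax(boosted)

before = softmax(scores)
after = recommend_with_boost(scores)
for product in scores:
    print(f"{product}: {before[product]:.2f} -> {after[product]:.2f}")
```

Route (a) leaves the model alone but adds one more rule to maintain. Route (b) raises product A for everyone and lowers both B and C, and in a real model with thousands of products, which ones lose out is much harder to predict than in this three-product toy.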

Case in point with our chatbot. If we try to make a chatbot more effective at understanding A, it might understand B less, or decrease our accuracy in predicting B. This means that in our attempt to satisfy the functional needs of A, we’ve hurt B. That type of problem usually doesn’t present itself so obviously in functional-oriented product management.

Now that you know, do others know?

If you’ve read this far and are nodding your head along, thanks. But this isn’t the end of the challenge. The other challenge is that ML-enabled products require working with other practitioners (engineers, designers, executives, data scientists) who not only understand the challenges of ML, but can also adapt their own functions to those challenges in a cross-functional, collaborative setting. In many industries, you don’t have a sufficient quorum of knowledge or awareness. This can be even more problematic for individuals in positions of authority. They’ve become successful in their positions operating with tools and techniques that worked for non-ML-enabled products, and now those tools aren’t working. It’s difficult to re-adjust suddenly, when the stakes are even higher. You might have a case of lions led by donkeys.

Additional Reading

How to Manage Machine Learning Products (decent overview if you’ve never worked on ML products, specifically the difference between an ML product and a product enabled by or applying ML technology)

Accuracy is just the tip of the iceberg: A Data-centric vs. User-centric Evaluation (really interesting paper that talks about user satisfaction, beyond accuracy of the model)