I'm thinking about software testing wrong.

When it comes to quality and testing, how do product managers set themselves and their product up for success?

Let’s paint a picture. You’re a PM at a company of fewer than 100 people. Maybe the company has 1 or 2 manual software testers. Maybe there are some automated tests, either unit tests or end-to-end tests, but those tests don’t cover all your core features and functionality. With an attitude of “PMs should step in to fill the gaps”, you’re performing manual testing to catch issues before each release. In this world, how should you balance testing against the other demands of your job?

What is software testing and product acceptance?

As part of product delivery, the purpose of software testing and product acceptance is to validate that the software performs as expected.

Why PMs care about software testing

Two reasons:

1. Validating the software “works” ensures the feature will deliver the expected value for your customers. It’s hard to create value if it doesn’t work.

2. Fixing a production bug after the software is released is 2x-3x more work. You want to avoid that. That’s time you could be spending elsewhere.

#1 is obvious. It’s rare for software to be released with glaring issues, but it’s not unheard of. Read about Cyberpunk 2077's long list of post-release hotfixes and patches.

#2. We all know that fixing something after the fact requires more work. You’ll have to manage dissatisfied customers, communicate with customer support, migrate data, remove features, etc. It’s generally better to measure twice and cut once.

Steps to improve quality while refocusing your time away from manual software testing.

Building a culture of quality starts with understanding how your company values and rewards quality. Matt Greenberg, CTO of Reforge and ex-VP of Engineering at Credit Karma, said it best:

I think that people underestimate the work that goes into good quality software. Culture takes a lot of work to build. It's also a different thing to do things fast vs good and sometimes you need to be able to do fast instead of good. So you sort of need to know what model you are working in, what you are optimizing for, and how to build or change the culture to support that.

As a leader, what you measure and reward is what you'll get back. If you reward the fast and loose engineers who ship the most code but leave lots of quality issues, you'll get a lot of that. If you reward the person who is methodical and does TDD and works to make sure the spirit of the function is complete, you'll get a lot of that.

Ask yourself, what does your company value and reward? Think from the executive team’s perspective, not as an individual product manager.

My own work experience, and that of others I’ve spoken with, tells me that most startups focus on speed and output instead of quality. A startup will continue to focus on speed and output until it has achieved an acceptable, sustained and repeatable growth rate. This is because speed and output are easier to measure and reward than quality. Furthermore, there’s a common mentality that speed of output allows the company to take more shots at solving a problem within a given time. Once the company or product finds sustainable growth, the company then shifts towards quality.

Document expected “happy path” behavior using GIVEN, WHEN, THEN. Even if your company is optimizing for speed, you should still do your part as a PM by defining the feature’s expected behavior (aka acceptance criteria). This should be in writing so others have the same understanding. If it’s unclear, how will you or anyone else know how to test that the feature will deliver the expected value?

Using the GIVEN, WHEN, THEN format is an easy way to write acceptance criteria.

GIVEN <Situation, something the user has done, something has occurred>

WHEN <User does something, time passes, another user does something>

THEN <action that should occur, outcome>

Use a checklist to help you write better GIVEN, WHEN, THEN. It’s hard to think through every situation, so here are four areas to consider when writing GIVEN, WHEN, THEN.

Response time (i.e., speed of a response). Is there a specific time requirement for how quickly the “THEN” needs to respond? Don’t make up an arbitrary time if there is no specific need; just say “as fast as possible”.

Device (i.e., any specific device variations). Are there different situations for different devices or browsers when writing the “GIVEN” statement?

Security (i.e., data and privacy). Are there specific data that must be hidden, encrypted, deleted, audited, retained, or not displayed in the THEN statement?

Volume (i.e., load). Is there a known volume of requests that will happen, concurrently or over a period of time? Often, this is unknown on day 1, so it’s okay to ignore it if you have no information. Don’t over-engineer for volume that may not materialize.
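As a rough illustration of the template and checklist above, here is a minimal Python sketch that captures one happy-path scenario and renders it as text for a user story. The `AcceptanceCriterion` class, its field names, and the password-reset scenario are all hypothetical and only meant to show the shape of a well-formed criterion, not a required tool or process.

```python
from dataclasses import dataclass, field


@dataclass
class AcceptanceCriterion:
    """One happy-path scenario in GIVEN, WHEN, THEN form (hypothetical helper)."""

    given: str
    when: str
    then: str
    # Optional checklist notes (response time, device, security, volume).
    # Leave an area out when there is no real requirement for it.
    checklist: dict[str, str] = field(default_factory=dict)

    def render(self) -> str:
        """Format the criterion as plain text to paste into a user story."""
        lines = [
            f"GIVEN {self.given}",
            f"WHEN {self.when}",
            f"THEN {self.then}",
        ]
        for area, note in self.checklist.items():
            lines.append(f"  ({area}: {note})")
        return "\n".join(lines)


# Made-up password-reset story, used only for illustration.
reset_password = AcceptanceCriterion(
    given="a registered user is on the login page",
    when="the user requests a password reset",
    then="a reset email is sent to the user's registered address",
    checklist={
        "response time": "as fast as possible",
        "security": "the email must not contain the current password",
    },
)

print(reset_password.render())
```

Device and volume are left out of this example on purpose: the checklist is a prompt for thinking, and an area with no known requirement does not need a line.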

Focus on the happy case, not creating all possible cases. Remember, your job is not to be the manual tester, even if that’s a portion of your current role. So, don’t create a separate spreadsheet to track test cases of all GIVEN, WHEN, THEN scenarios. Keep what you write to the most common happy case scenarios and include it in the user story.

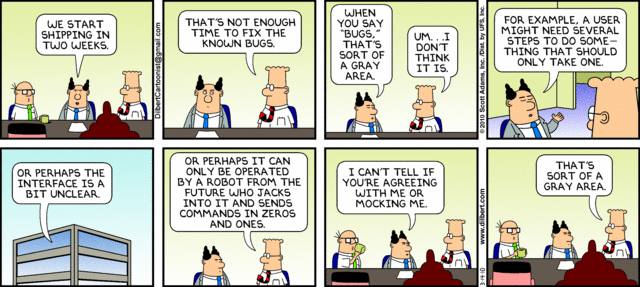

Empower others to stop work by defining acceptable quality. There are two approaches to quality.

The first approach is to assign a single person or particular function to own quality. This could be a dedicated SWE-Test, the engineering function, yourself, product management, etc. While it’s good in theory, I’ve never seen it work once the organization grows past 25 people. At scale, there are too many people and processes involved in building something for a single person or function to ensure quality without a lot of conflict. Instead, pushing quality onto a single individual or function usually makes quality control a reactive role: quality is checked only after something is built, which leads to a lot of disappointment after the fact.

The alternative approach is to empower everyone to own quality. But how do you empower everyone without empowering no one? You start by defining the level of quality that’s acceptable. Here’s that in practice:

Quality of a product or feature is its output. But what does that output quality mean? For example, what’s an acceptable visual quality? What’s an acceptable functional quality? Is having documentation available as part of a release part of your quality standards? Pick an area to explore.

Take that area and define what acceptable means. How will you know something is of acceptable quality given your current resources and skills? For some areas, you might be able to quantify it explicitly: “No more than 2 known bugs per release, regardless of bug priority.” It’s important not to get caught up in how to achieve the standards or in setting the “best standards”. Aim for great, not perfect.

Once you have a few definitions of quality and how you’ll measure them, you have a baseline and a shared language for quality. Until you can articulate what good quality looks like for your product/feature, it is not possible to formulate any plan around upholding this level of quality.

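Now, empower people to call “stop” when the quality of the product is deviating from this baseline. This means some products or features won’t be released until they meet it. The idea is borrowed from manufacturing, where any worker on the line can halt production when they spot a defect.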

Finally, you must identify and reward behaviors where someone speaks up and says “Stop, we can’t release this because it’s against baseline quality standards”. This is why I said earlier that you have to define an acceptable level of quality and aim for great, not perfect. Common conflicts at this point are:

“We must release because XYZ / Just this one time”: This goes back to the starting point, company culture. Perhaps the intention is to focus on quality, but the reality is the company is focused on speed and output. Alternatively, you might have set the baseline quality level too high given the resources and time constraints. Either lower your quality standards or re-evaluate whether this is the right time for this exercise, given what the company is trying to optimize for.

“This isn’t good enough”: This is when someone wants a higher quality than the agreed-upon baseline. While that’s commendable, if the work already meets the agreed-upon baseline, you should be comfortable releasing and moving forward for now. Separately, you can always revisit the baseline standards to further improve quality.
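To make the baseline-and-stop idea concrete, here is a small Python sketch of a release check. It assumes a baseline with two standards: the “no more than 2 known bugs per release” example from above, plus a made-up documentation requirement. The names `QualityBaseline`, `ReleaseCandidate`, and `stop_reasons` are hypothetical; the point is only that a written, measurable baseline gives everyone the same grounds for saying “stop” or “ship”.

```python
from dataclasses import dataclass


@dataclass
class QualityBaseline:
    """Agreed, measurable definition of acceptable quality (illustrative)."""

    max_known_bugs: int = 2     # "no more than 2 known bugs per release"
    docs_required: bool = True  # assumed second standard, for illustration


@dataclass
class ReleaseCandidate:
    name: str
    known_bugs: int
    docs_available: bool


def stop_reasons(candidate: ReleaseCandidate, baseline: QualityBaseline) -> list[str]:
    """Return every way the candidate falls below the baseline.

    An empty list means the baseline is met and the release can go ahead,
    even if someone would personally prefer a higher bar.
    """
    reasons = []
    if candidate.known_bugs > baseline.max_known_bugs:
        reasons.append(
            f"{candidate.known_bugs} known bugs exceeds the limit of "
            f"{baseline.max_known_bugs}"
        )
    if baseline.docs_required and not candidate.docs_available:
        reasons.append("documentation is missing")
    return reasons


baseline = QualityBaseline()
rushed = ReleaseCandidate("checkout-v2", known_bugs=3, docs_available=False)
ready = ReleaseCandidate("search-filters", known_bugs=1, docs_available=True)

for candidate in (rushed, ready):
    reasons = stop_reasons(candidate, baseline)
    verdict = "STOP" if reasons else "SHIP"
    print(candidate.name, verdict, reasons)
```

Both conflicts map onto this check. “Just this one time” is a request to ignore a non-empty list of reasons, while “this isn’t good enough” is a request to block a release whose list is empty. In either case the conversation to have is about changing the baseline, not about the individual release.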

Final comment

Some people who read the above will feel what I’m recommending is all wrong. If you’ve ever read about the Toyota Way, you’ll say everything I’ve written about improving quality is wrong.

And I agree with you, I’m wrong. What I recommend are band-aids for companies and products where speed and output are valued over quality and you’re looking to transition to releases with a more consistent standard of quality. Unless executive leadership focuses on the long term and values quality, I don’t believe most PMs will be able to institute fundamental changes. Leave a comment below if you disagree.
